Generative artificial intelligence (AI) is everywhere. To some, it’s the next industrial revolution, comparable to the printing press, the lightbulb, pasteurized milk or the automobile: the best thing since sliced bread.

Its usefulness is demonstrated not only in the tech industry from which it hails, but also in policing, education, business and nearly every other industry where technology is used. Its creators speak about it in almost messianic terms, whether as our saving grace, our inevitable future or our demise.

The technology has captured the attention of the Trump Administration, while in the private sector, companies compete to stay ahead in the race for this enormously profitable industry.

To be sure, the applications of AI are demonstrably far-reaching. In less than a decade, generative AI has been trained to write like us, speak like us, draw, plan, sort, read and analyze like us. It can shape our thinking and do our bidding in ways that seem like nothing short of magic.

But unlike magic, AI cannot create something from nothing. Its products, though intangible, have a connection to the physical world.

It’s a connection that scientists and researchers say is making a significant impact on the environment.

But do its environmental and fiscal costs outweigh the benefit of its usage? That’s not an easy question to answer, especially when the current form of the technology is in its relative infancy.

To explore this question, we asked experts in environmental and computer science fields to share what they know about the technology, along with their concerns and their more optimistic outlooks. We also explored how the cool, water- and land-rich environment of the Great Lakes region might make it the perfect spot for the very infrastructure that enables the AI boom.

What is Generative Artificial Intelligence?:

One Einstein vs. the GPU

Though we’ve described AI as a new technology, it isn’t as new as it appears. Machines have been computing (hence the word “computer”) since computers were invented. The difference now is that a computer can do far more computing, more quickly and more efficiently.

Dr. Jake Lee is an associate professor and chair of the Department of Computer Science at Bowling Green State University (BGSU). As someone who works intimately with the technology, he explains generative AI as it exists now. He said generative AI – such as ChatGPT – is a technology built on top of existing AI tech, and accomplishes its tasks by computing probabilities.

“Generative AI can understand what we are telling them by understanding the sequence of the words they (the users) are putting in. And then they use heavy probabilities to understand what we’re saying. So the difference is natural language processing is built on top of the typical, or the family of AI technology.”
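Lee’s point that generative AI works by computing probabilities over sequences of words can be illustrated with a deliberately tiny sketch. The Python below is a toy “bigram” counter that estimates which word is likely to come next; it is not how ChatGPT or any production large language model works (those use neural networks trained on enormous amounts of text), and the sample sentence and function names are hypothetical, chosen only for illustration.

```python
from collections import Counter, defaultdict

def build_bigram_counts(text):
    """Count how often each word is immediately followed by each other word."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def next_word_probabilities(counts, word):
    """Turn the follow-on counts for `word` into probabilities that sum to 1."""
    followers = counts.get(word.lower(), Counter())
    total = sum(followers.values())
    return {w: n / total for w, n in followers.items()} if total else {}

# Hypothetical "training" text, purely for illustration.
corpus = "the lake is cold the lake is deep the water is cold"
counts = build_bigram_counts(corpus)
print(next_word_probabilities(counts, "is"))
```

In this sample, “is” is followed by “cold” twice and “deep” once, so the sketch assigns “cold” a probability of about 0.67 and “deep” about 0.33. Real models make the same kind of bet, but over vastly more context and vocabulary.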

In other words, it “understands” what you’re saying, unlike a traditional computer that responds only to specific commands. That’s because large language models (LLMs) are loosely modeled on the human mind, the supercomputer between your ears.

“AI algorithms basically mimic the human brain, the neurons and other things in the human brain,” Lee said. “But in the computer, we have to mimic it, and that requires a lot of computation.”
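In practice, “mimicking neurons” boils down to a great deal of arithmetic. A minimal sketch, assuming the textbook artificial neuron (a weighted sum of inputs passed through an activation function); the inputs, weights and layer size below are made up for illustration and do not describe any particular model.

```python
import math

def neuron(inputs, weights, bias):
    """One artificial neuron: a weighted sum of inputs, squashed by a sigmoid."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1 / (1 + math.exp(-total))

def multiply_adds_per_layer(n_inputs, n_neurons):
    """In a fully connected layer, each neuron does one multiply-add per input."""
    return n_inputs * n_neurons

# Made-up numbers: a single neuron with three inputs.
print(neuron([0.5, -1.0, 2.0], [0.8, 0.2, 0.1], bias=0.0))

# A hypothetical layer with 4,096 inputs and 4,096 neurons.
print(multiply_adds_per_layer(4096, 4096))  # 16777216
```

Roughly 16.8 million multiply-adds for one such layer, repeated across many layers and many user requests, gives a sense of why Lee says mimicking the brain “requires a lot of computation.”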

He said that before the year 2000, computers did most of their computation on a central processing unit, or CPU, while the graphics processing unit, or GPU, was used mainly to drive screen displays. Today, that’s changed: computer scientists can program GPUs directly.

But what is the difference between these two things, and why does that matter?

“The CPU is like an Einstein brain,” Lee said, referring to esteemed physicist Albert Einstein. “There are four or six of them in a computer. A graphics card (GPU) is maybe like a person like me, or regular people, but on one graphics card, there are 3,000, 4,000 of us.”

The metaphor supposes that although Einstein was a once-in-a-generation genius, he was only one person. A GPU, by comparison, resembles the brains of thousands of people working together all at once, which, Lee says, arguably gives it much more power than a single Einstein.

“There’s so much power here in one chip. In order to mimic the human brain’s activities in the machine-learning algorithm, or the AI algorithm, we connect many of these GPUs,” he said.
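Lee’s Einstein metaphor can be turned into a back-of-the-envelope model. The sketch below assumes, purely hypothetically, that each CPU core finishes 10 simple operations per time step while each GPU core finishes one, and that the job splits into fully independent pieces (roughly true of the matrix arithmetic behind AI, but not of every task). Real chips differ in many other ways, so the numbers are illustration only.

```python
import math

def steps_needed(tasks, workers, ops_per_worker_per_step):
    """Time steps to finish `tasks` independent operations shared evenly by workers."""
    per_step = workers * ops_per_worker_per_step
    return math.ceil(tasks / per_step)

TASKS = 1_000_000  # hypothetical job: one million independent multiplications

# Hypothetical figures loosely following Lee's metaphor.
cpu_steps = steps_needed(TASKS, workers=6, ops_per_worker_per_step=10)    # a few "Einsteins"
gpu_steps = steps_needed(TASKS, workers=4000, ops_per_worker_per_step=1)  # thousands of "regular people"

print(f"CPU: {cpu_steps} steps, GPU: {gpu_steps} steps")  # CPU: 16667 steps, GPU: 250 steps
```

The key assumption is independence: if every operation depended on the one before it, only one worker could be busy at a time, and the faster CPU core would finish first.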

Computers, though they sometimes feel like magic, aren’t. There will always be a real-world tradeoff, because all of this computational processing comes at a price: enormous amounts of energy.

Heating Up, Cooling Down:

How AI uses so much energy

Have you ever felt your computer get warm after a long session with demanding software, like a video editor or a graphics-heavy video game? That’s because it’s using energy, and energy, according to the first law of thermodynamics, cannot be created or destroyed. It must go somewhere.

In this case, your computer’s processor is working hard and consuming energy, which in turn produces heat. In a laptop or desktop, an internal fan might kick on to carry some of that heat away and keep the internal components from overheating.

But generative AI and other online services, which rely on processing at a much larger scale, don’t run on your personal computer. At that scale, much more than an internal fan is needed to keep the machinery cool.

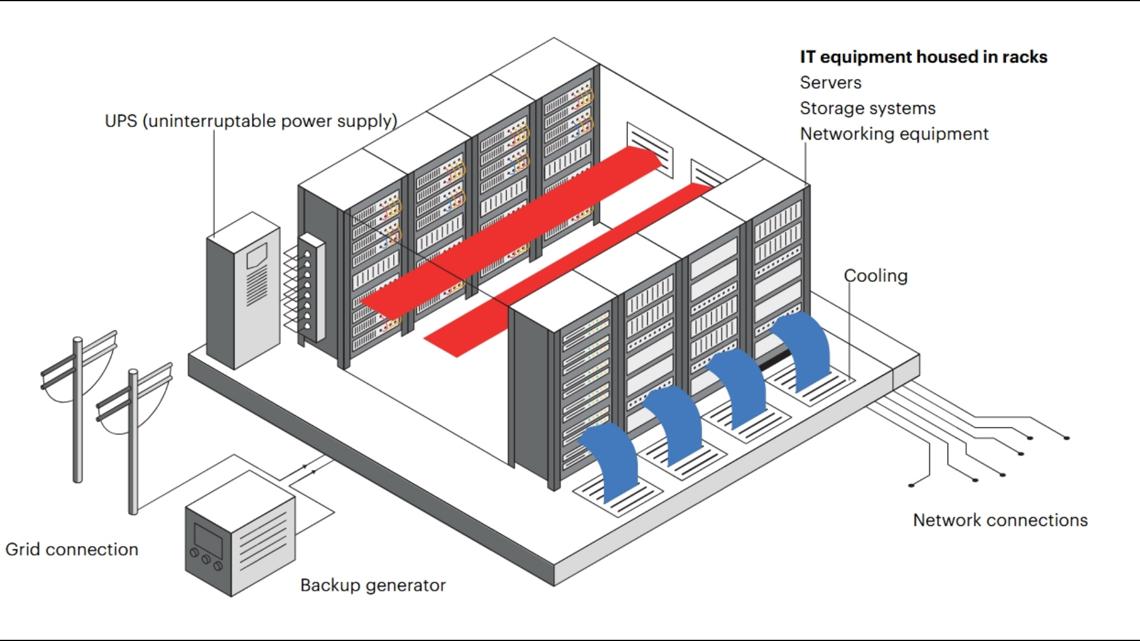

Let’s say you run a Google search, play Fortnite or ask ChatGPT to write you an email. The query or command is passed electronically to a data center that houses servers. Like your home computer, these servers need energy to function, usually in the form of electricity.

But as the servers complete each task, they also – just like a video game console or laptop – generate heat.

And when servers heat up, they must be cooled down in order to continue functioning. While air conditioning is an option, that too generates its own heat and can be astronomically expensive, especially if you’re cooling servers in thousands of data centers across the country.

While data centers aren’t anything new, their energy requirements climb steeply when it comes to artificial intelligence.

Remember those 3,000 to 4,000 brains against the singular Einstein brain? Let’s extend that metaphor: Place each of those brains into a person’s head, and imagine they are working together in an office or on a factory floor. Yes, they will be more productive than our friend Albert Einstein constructing theories of relativity on his own, but the thousands will need more food and water than the singular Mr. Einstein. They will, by virtue of breathing and moving and metabolizing, generate more heat. They will eventually need air conditioning to keep them comfortable.

The same can be said about the thousands of GPUs working on thousands of servers in thousands of data centers around the country. They require extraordinary amounts of energy. That much energy means more heat. More heat means more cooling.

So if air conditioning isn’t practical, how are these servers cooled? The answer is something humans, and now machines, can’t live without: Water.

Going green (or blue):

The Great Lakes equation

It might seem counterintuitive to use water to cool electronics (two things that famously don’t mix), but the process, called “once-through cooling,” is fairly common. And while it’s much more energy efficient than using air conditioning to cool the machinery, it also has environmental impacts.

Here’s how once-through cooling works, according to the U.S. Energy Information Administration:

“In a once-through system, intake structures withdraw water, which is then run through the power plant for cooling. Once used, the water is discharged back into the body of water at a higher temperature. Both the water intake and thermal discharge can affect local aquatic wildlife.”

In other words, the water is drawn in cool and returns to its source at a higher temperature, having absorbed the heat generated by the electronics.
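How much warmer the discharged water gets follows from a standard heat-balance formula: heat absorbed equals mass flow times specific heat times temperature change (Q = ṁ·c·ΔT). The sketch below rearranges it to solve for ΔT. The 10-megawatt heat load and the flow of 5 million gallons a day are hypothetical inputs chosen only for illustration, and the sketch assumes all of the heat ends up in the water.

```python
LITERS_PER_US_GALLON = 3.785411784
WATER_SPECIFIC_HEAT = 4186.0  # joules per kilogram per degree Celsius (approximate)
SECONDS_PER_DAY = 86_400

def outlet_temperature_rise(heat_watts, gallons_per_day):
    """Temperature rise (deg C) of cooling water that absorbs `heat_watts` of heat.

    Rearranges Q = m_dot * c * dT, assuming 1 liter of water weighs about 1 kg
    and that every watt of heat goes into the water.
    """
    kg_per_second = gallons_per_day * LITERS_PER_US_GALLON / SECONDS_PER_DAY
    return heat_watts / (kg_per_second * WATER_SPECIFIC_HEAT)

# Hypothetical inputs: a 10 MW heat load cooled by 5 million gallons a day.
rise = outlet_temperature_rise(10e6, 5_000_000)
print(f"Water leaves about {rise:.1f} °C warmer")  # about 10.9 °C
```

Under these assumptions, halving the flow doubles the temperature rise, which is why both the volume of water taken in and the heat sent back out matter for local aquatic wildlife.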

The laws of thermodynamics demand that the heat go somewhere, and in this case, it goes into the water, a lot of it. The Associated Press reported in August that larger data centers can consume up to 5 million gallons of water a day, roughly the daily water demand of a town of up to 50,000 people, or slightly more than the entire population of the city of Findlay.
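The AP comparison implies a per-resident figure that is easy to check. This short sketch simply divides the reported daily demand by the town size, using only the two numbers from the AP report.

```python
def gallons_per_person(daily_gallons, population):
    """Daily water use per resident implied by a town's total daily demand."""
    return daily_gallons / population

# Figures from the AP report: up to 5 million gallons a day, a town of up to 50,000 people.
print(gallons_per_person(5_000_000, 50_000))  # 100.0
```

In other words, the comparison assumes each resident uses about 100 gallons of water a day.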

At the local level, this puts the Great Lakes region in an interesting position. Dr. Timothy Pape, an assistant professor of Environmental Science at BGSU, described how companies get the water needed to cool their servers, as well as how the cooling process works.

“A lot of different data centers pump water into their processing server’s infrastructure, and that often comes from the city water grid,” he said. “That’s something that has to be considered from a design perspective: how much water can that area provide to a data center so that it can cool down the servers?… It can, depending on the size of the data center, be quite a large resource that’s needed.”

According to NOAA, the Great Lakes contain 21% of the world’s surface fresh water. Beyond ample water, companies also look for locations with cooler weather. Though northwest Ohio has some scorchers in the summer, its winters are far cooler than those in the southern and western parts of the country.

With all the water in the Great Lakes region and its cooler climate, Pape said it seems likely tech companies would pursue the region as a place to build their data centers.

“I think that’s a likely scenario,” Pape said, when asked if companies would select the Great Lakes for data centers. “The country of Ireland has a lot of data centers because it has some similar climates and resource dynamics as the Great Lakes, and so Ireland has grown its data center footprint quite substantially, which has led to questions about energy usage within the country.”

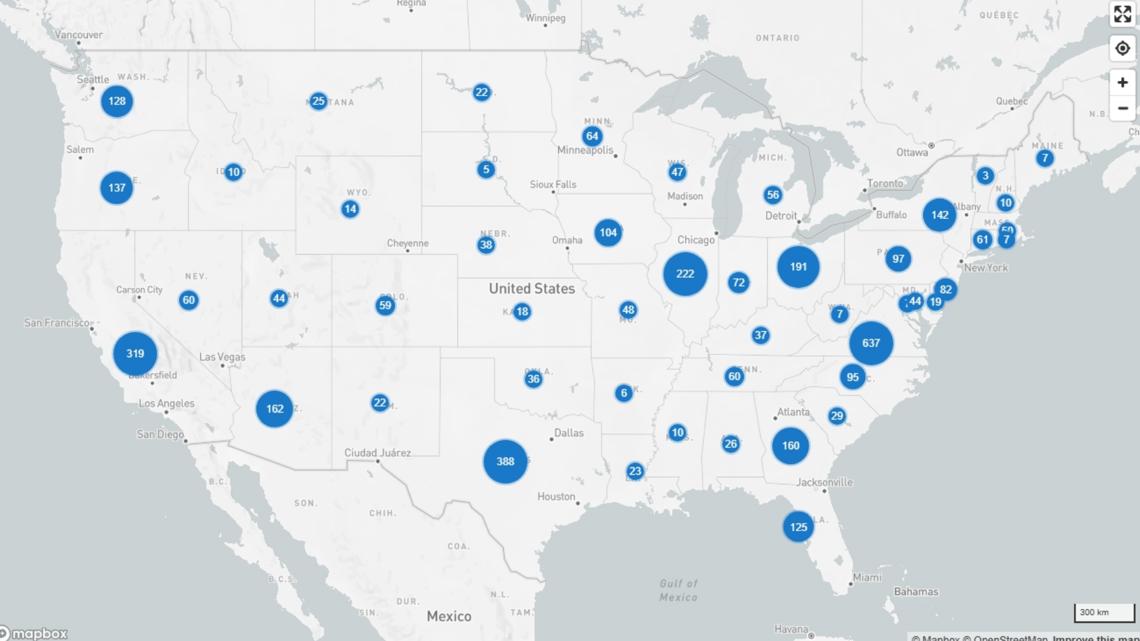

According to Data Center Map, a website that catalogs data centers around the world, there are 123 data centers in Ireland and 4,049 in the United States. Because the United States is almost 117 times larger than Ireland, density is a fairer comparison: Ireland has one data center for every 265 square miles of land, while the United States has one for every 999 square miles.

But data centers aren’t evenly distributed, of course. Virginia has more than any other state: a whopping 637 of them. Other states have large numbers as well.

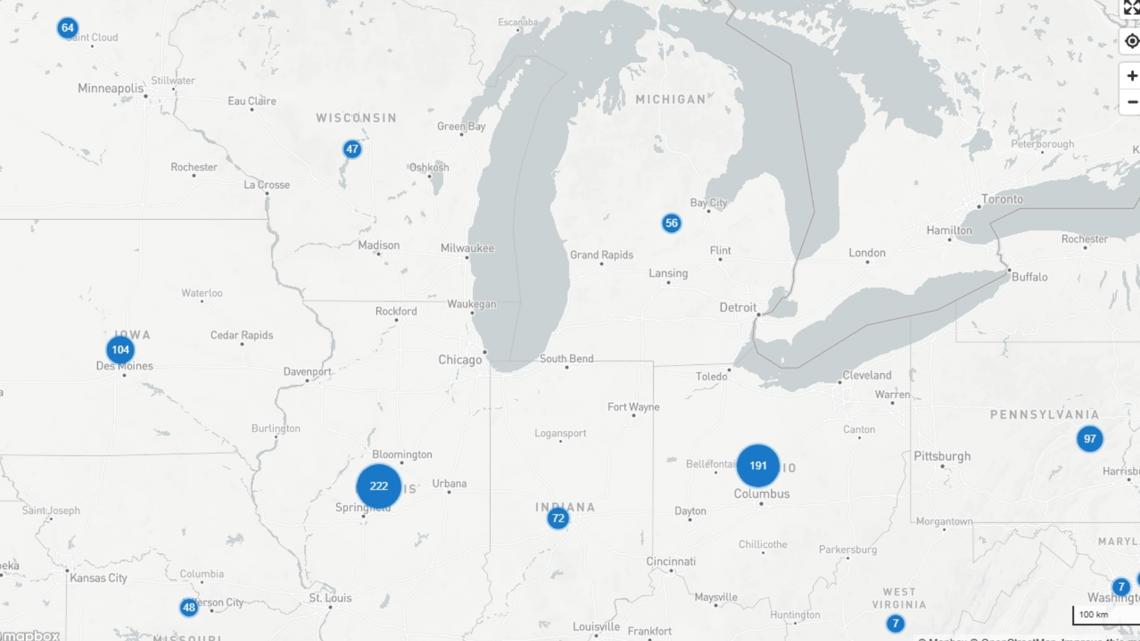

Below is a state-by-state breakdown of data centers in the Great Lakes region:

- Ohio: 191 data centers

- Michigan: 52 data centers

- Illinois: 222 data centers

- Indiana: 72 data centers

- Wisconsin: 47 data centers

- Minnesota: 64 data centers

Ohio, which is about 1.4 times larger than Ireland, has a similar density of data centers to the Emerald Isle: about one for every 234 square miles. The Buckeye State, with its sprawling, flat land and easy access to huge quantities of water, clearly has the eye of data center developers.
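The density comparisons above come from dividing land area by the number of data centers. The sketch below reproduces the Ireland and Ohio figures; the areas are assumptions not stated in the article (about 32,595 square miles for the whole island of Ireland, including Northern Ireland, and 44,825 square miles for Ohio’s total area), while the counts are the Data Center Map figures cited above.

```python
def square_miles_per_data_center(area_sq_mi, data_centers):
    """Average area per data center (a lower number means more densely packed)."""
    return area_sq_mi / data_centers

# Assumed areas in square miles (not given in the article).
IRELAND_AREA = 32_595  # whole island, including Northern Ireland
OHIO_AREA = 44_825     # Ohio total area

ireland = square_miles_per_data_center(IRELAND_AREA, 123)
ohio = square_miles_per_data_center(OHIO_AREA, 191)

print(f"Ireland: one per {ireland:.0f} sq mi")
print(f"Ohio: one per {ohio:.0f} sq mi")
print(f"Ohio is {OHIO_AREA / IRELAND_AREA:.1f}x Ireland's area")
```

With these assumed areas, Ireland comes out at exactly 265 square miles per data center and Ohio at about 234.7, and the size ratio rounds to 1.4, in line with the figures in the text.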

But how much water is too much? At least for now, Pape said the Great Lakes region doesn’t face significant and regular water scarcity issues, so the potential for water overuse by data centers in northwest Ohio isn’t his primary concern.

A potentially larger problem at hand is energy usage, he said.

“Make no mistake, a data center requires a lot of electricity,” said Pape. “So suddenly a region [with data centers] is going to have a higher electricity sort of requirement on it than it perhaps had before.”

Water, water everywhere:

Will the Great Lakes become the next AI hub?

- “Water, water, every where / Nor any drop to drink” – The Rime of the Ancient Mariner (1798)

Ohio, already home to nearly 200 data centers, will soon add several more in the northwest part of the state.

A data center is slated to be built in the city of Oregon, with talks for a second one underway. Meanwhile, Meta is building a center of its own in Wood County, with plans to become operational in 2027.

Western Lucas County communities, such as Monclova and Spencer townships, have expressed interest in building data centers in the area, the Toledo Free Press reported in August.

It’s the region’s cooler climate, flat land and ample water reserves that make it especially attractive to developers and tech companies. And it’s the revenue they bring with them that makes them desirable for communities.

“There could be an influx of people who want to be in the Great Lakes region to help build this technology center that some people are envisioning, but additionally any more people, any more industry is going to require more resources,” said Pape. “We don’t want to tell industries not to come here, but we want to be able to manage these changes in a thoughtful way.”

But while these companies seem to be eyeing the Great Lakes region for data centers, and leadership from the municipal to the state level is eager to reap the economic benefits, not everyone is happy. Beyond the environmental load of the facilities, some residents worry about the strain they might put on the water supply and electrical grid.

In Lucas County’s Richfield Township, a proposed zoning change would have paved the way for “advanced manufacturing,” a category that includes data centers and research facilities. After community members voiced their objections, township officials rescinded the amendment that would have allowed it.

Returning to Wood County, Middleton Township trustees withdrew a request to rezone two parcels of farmland for industrial use near the in-development Meta data center after residents raised concerns about their quality of life, as well as about water use, noise and light.

But local leaders stress the economic development these data centers bring as the AI industry booms. Oregon City Council projects the data center could bring over $1 billion to the economy, which would boost revenue for city schools. According to a local union leader, it would also create as many as 600 to 800 jobs.

It’s Electric:

The state of our electricity and the demands of data centers and AI

EDITORS’ NOTE: This section of the article has been updated to include a statement from FirstEnergy.

Concerns about the electrical impacts of data centers are not unfounded, according to energy experts. And it’s not just the energy-hungry data centers themselves; it’s how they’re being used.

AI, as previously established, requires more energy than other technologies. The International Energy Agency (IEA) reported that AI queries require considerably more electricity than conventional web searches. It said a typical Google search (without the AI features Google has since added) uses 0.3 Wh (watt-hours) of electricity, while a ChatGPT request uses 2.9 Wh.

“Considering 9 billion searches daily, this would require almost 10 TWh (terawatt hours) of additional electricity in a year,” the organization wrote in the report.
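The IEA’s estimate can be roughly reproduced from its per-query numbers. A minimal sketch, assuming every one of the 9 billion daily searches switches from a 0.3 Wh conventional search to a 2.9 Wh ChatGPT-style request, every day of the year:

```python
WH_PER_SEARCH = 0.3       # conventional Google search (IEA figure)
WH_PER_AI_REQUEST = 2.9   # ChatGPT request (IEA figure)
SEARCHES_PER_DAY = 9e9    # IEA figure
DAYS_PER_YEAR = 365

# Extra energy per query, times queries per day, times days per year.
extra_wh_per_year = (WH_PER_AI_REQUEST - WH_PER_SEARCH) * SEARCHES_PER_DAY * DAYS_PER_YEAR
extra_twh_per_year = extra_wh_per_year / 1e12  # 1 TWh = 10**12 Wh

print(f"{extra_twh_per_year:.1f} TWh of additional electricity per year")
```

Counting only the difference between the two queries gives about 8.5 TWh a year; counting the full 2.9 Wh per request gives about 9.5 TWh. Either way, the result is broadly in line with the IEA’s “almost 10 TWh.”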

The IEA projects that global electricity consumption from data centers will double by 2030, accounting for just under 3% of the entire planet’s total consumption that year. Growth driven specifically by AI adoption is projected at about 30% per year.
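Compounding these growth rates shows how different they are. A minimal sketch: the effect of 30% annual growth sustained for a hypothetical five years, and the steady annual rate that doubling over a hypothetical six years (for example, 2024 to 2030) would require. The start and end years are assumptions for illustration; the IEA sets its own baseline.

```python
def compound(growth_rate, years):
    """Multiplier after `years` of steady growth at `growth_rate` (0.30 means 30%)."""
    return (1 + growth_rate) ** years

def rate_to_multiply(multiplier, years):
    """Steady annual growth rate needed to reach `multiplier` in `years`."""
    return multiplier ** (1 / years) - 1

print(f"30% a year for 5 years: {compound(0.30, 5):.2f}x")          # 3.71x
print(f"Doubling in 6 years: {rate_to_multiply(2, 6):.1%} a year")  # 12.2% a year
```

Under these assumptions, demand growing 30% a year nearly quadruples in five years, while doubling over six years requires only about 12% a year.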

But do we have enough electricity to support these ventures? The Associated Press reported in August that some data centers could require more electricity than cities the size of Cleveland or Pittsburgh. Like water, electricity is a finite resource. And that resource comes at a cost.

Traditionally, transmission costs are distributed among classes of consumers, but with the unprecedented amount of energy data centers need as the U.S. competes for global dominance in AI, evidence suggests that this demand is driving up costs.

At least in Ohio, this cost may fall on the companies operating the data centers rather than on other consumers, after the Public Utilities Commission of Ohio approved a measure allowing American Electric Power to charge data centers differently for their electricity usage, the Ohio Capital Journal reported on Sept. 11, 2025.

The decision can still be appealed to the Ohio Supreme Court, and some have already said they plan to do so.

As to whether the electrical demands of data centers are cost-prohibitive, or indeed, sustainable, that remains to be seen.

WTOL 11 reached out to FirstEnergy, which distributes electricity in northwest Ohio, to ask whether its grid could handle the demands of data centers and whether it anticipates higher costs for consumers. A spokesperson for the energy company responded with the following statement:

“With more than $28 billion in planned investments through 2029, FirstEnergy is modernizing our transmission and distribution infrastructure to meet the evolving demands of the grid – especially in response to high-load growth from AI, data centers and advanced manufacturing,” said Hannah Catlett, FirstEnergy’s communication representative for northwest Ohio.

“FirstEnergy’s strategic investment in northwest Ohio’s electric grid is powering more than just infrastructure, it’s fueling economic growth and unlocking new opportunities for energy-intensive industries across the region,” Catlett continued. “With major upgrades, like the new high-voltage transmission infrastructure in Fulton, Lucas and Wood counties, it’s an exciting time to do business here. And the benefits don’t stop there, this work strengthens reliability for thousands of residents and small businesses, making it a win for the entire community.”

Lessening the Impact:

What are ways tech companies could make data centers more green?

With AI likely here to stay and data center growth showing no sign of slowing down, innovators are searching for more environmentally friendly ways to build and run the technology. The IEA said renewable energy remains the fastest-growing source of electricity for data centers, and it expects that growth to continue.

Some companies are seeking cleaner sources for the massive amount of energy data centers require. Recently, Meta signed a 20-year deal to secure nuclear power to help meet the growing demands of AI. Though nuclear power produces radioactive waste, generating it does not emit carbon dioxide or other greenhouse gases.

Pape also stressed the importance of renewable energy sources when it comes to AI and data centers.

“If it (energy) is being generated through wind turbines, that maybe is a cleaner form of artificial intelligence processing happening in those data centers. If it’s through coal power plants, that’s much more environmentally impactful, not only from a climate perspective, but just from an air quality as well.”

Lessened environmental impacts might also come from how the technology is used, not just how it is powered. One option is load balancing: sending AI requests to data centers with a smaller carbon footprint.

“A lot of artificial intelligence tools these days don’t necessarily push the data processing to your closest data server or your closest data center, and so it actually will send it to some other region in the world that’s potentially not having as much data processing happening at that exact moment,” he said.

You might, then, be in Toledo while sending a query to ChatGPT, but the data center that processes it is located in, for example, Ireland. Or vice versa.

This capability could be used to mitigate some of the technology’s environmental toll, Pape suggested. He referenced a recent paper by researchers at the University of California, Riverside, which suggested that deliberate load balancing could make data centers more equitable and less taxing.

The researchers argue that AI’s environmental footprint has been, and will continue to be, disproportionately higher in certain regions, raising what they call social-ecological justice concerns; that is, a data center will be more environmentally taxing in some areas than others.

“The idea is that we could actually send the data process to places in the world where there are less chances of environmental harms,” Pape said.

In practice, this might mean that data centers in a region affected by a drought or a heat wave could shut down or scale back until more favorable weather returns. Requests, meanwhile, would be offloaded to data centers in regions that could handle them.

But this would require cooperation on a global scale.

“Implementing this at scale seems very difficult,” he said. “To some degree, there’d have to be a lot of buy-in from corporations and from governments.”
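The routing idea Pape describes can be sketched as a simple rule: skip data centers that are under drought or heat-wave restrictions or have no spare capacity, then pick the cleanest one that remains. Everything in this sketch is hypothetical (the site names, the carbon-intensity numbers and the `DataCenter` fields are made up), real schedulers also weigh latency, cost and much more, and it is not the method from the UC Riverside paper.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class DataCenter:
    name: str
    grams_co2_per_kwh: float  # hypothetical carbon intensity of the local grid right now
    spare_capacity: int       # requests it can still accept
    restricted: bool          # True during a drought or heat wave

def choose_data_center(centers: list[DataCenter]) -> Optional[DataCenter]:
    """Route a request to the lowest-carbon site that is unrestricted and has room."""
    eligible = [c for c in centers if not c.restricted and c.spare_capacity > 0]
    if not eligible:
        return None  # nowhere suitable; the request would have to wait
    return min(eligible, key=lambda c: c.grams_co2_per_kwh)

# Hypothetical sites and numbers, for illustration only.
sites = [
    DataCenter("Site A", grams_co2_per_kwh=450, spare_capacity=120, restricted=False),
    DataCenter("Site B", grams_co2_per_kwh=120, spare_capacity=0, restricted=False),
    DataCenter("Site C", grams_co2_per_kwh=90, spare_capacity=300, restricted=True),
    DataCenter("Site D", grams_co2_per_kwh=200, spare_capacity=50, restricted=False),
]

chosen = choose_data_center(sites)
print(chosen.name if chosen else "no site available")  # Site D
```

Here the cleanest site (C) is sitting out a heat wave and the next cleanest (B) is full, so the request goes to Site D. Doing this across companies and borders is the “buy-in” problem Pape describes.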

Another alternative might come in the form of where data centers are built. That might mean building in a colder, more water-rich environment. It might mean not even building them on land.

“They (tech companies) are currently testing data centers, for example, underwater to see if that’s even possible,” Lee added. “If we are building it, we need to make sure that we can actually sustain it.”

Lee said he was optimistic about the journey of generative artificial intelligence. The technologies, he said, occupy a lot less space than computers of the past, and they are much more efficient as well.

“So there is a concern,” Lee said. “But I believe that people will find a solution. That’s what we do, typically.”

Ultimately, however, Pape said the extent of the environmental impact might come down to consumer choice.

“When we foreground environmental impacts for any kind of practice, technology, things like that, it then puts on the consumer a bit of a choice, right? Rather than saying you have to use AI tools for everything you do in life; that would take away the autonomy of consumer choice.”

Warm machines on a warming planet:

Could AI be the key to solving global warming?

AI is often pitched as a way to make humans more productive, faster and more efficient at everything they do. Some see it as a brainstorming or problem-solving tool, something to help you come up with ideas you may not have previously considered.

So if this machine is as powerful as 3,000 to 4,000 human brains, could it help solve the very problem it is exacerbating?

To Pape, this seems unlikely: AI is good at synthesizing data and making predictions based on it. But everything it knows and understands is based on solutions humans have already posited.

“Any tool that allows you to come at a problem or come at an issue or a topic in a more substantial, sophisticated manner, seems like a valuable one, but it’s not a panacea,” Pape said. “At the end of the day, and this is, I think, a key to this, that much of what AI will do, especially a chatbot, is say, ‘This is the historical reference. These are the things that have happened. I’m taking this data, synthesizing it, giving it to you in a way that will help you answer the question you’re looking at.'”

Perhaps it is through two of AI’s unique abilities, processing data far faster than a human being can and making predictions from it, that headway can be made against the march toward an irreversibly hotter planet. In a race against time and temperature, AI can gather, sort and analyze data, speeding up work that might otherwise take scientists an enormous amount of time.

In fact, scientists are already using AI to make their studies of climate change more efficient, studies that ultimately inform policy and environmentally friendly practices.

For example, a 2023 study that used machine learning predicted a more precise timeframe for when the Earth is expected to reach the 1.5 degrees Celsius mark, the warming limit the 2015 Paris climate agreement aims to stay within. The Associated Press reported that the scientists used AI to produce a number of scenarios suggesting global warming could pass a 2-degree Celsius increase by 2054, much earlier than other studies, which predicted reaching that threshold in the 2090s.

There are studies showing AI can monitor the melting rate of Antarctic icebergs, predict areas prone to deforestation and even learn to reduce its own carbon emissions and impact depending on energy needs. It can analyze weather data and compare it to historical climate trends, as well as monitor cloud cover for solar power production. It could even help companies reduce waste by improving design cycles for tech products and machinery.

But the machine isn’t magic. It won’t do these things on its own. In the end, a human solution is likely going to be required to solve a human problem when it comes to climate issues.

In short, Pape asserts, if we haven’t actually solved global climate change, thinking AI will be able to provide us with the perfect answer is “unfortunately unrealistic.”